Google’s new AI smart glasses are shaping up to be more productivity-focused than social, and the company’s making it pretty clear it’s not chasing Meta’s Ray-Ban playbook. Google’s December 2025 announcement formally introduced two consumer varieties so far, an audio-only pair and a model with an in-lens display, and lined up Warby Parker and Gentle Monster as the first eyewear partners. Beyond those two confirmed types, early industry briefings have outlined a three-tier roadmap built around productivity, real-world utility, and Gemini AI.

This isn’t another Google Glass moment. The pitch this time is about everyday usefulness: hands-free Gemini help, on-the-go directions, real-time translation, and contextual answers about whatever you’re looking at, all wrapped in frames designed to look like normal eyewear instead of a tech experiment. Here’s what’s actually known so far about Google’s new AI smart glasses, how the three-tier lineup is shaping up, and how it stacks up against Meta’s Ray-Ban push.

The Three Models in Google’s New Lineup

According to early industry briefings, Google’s smart glasses will come in three distinct tiers, branded as the Gemini Audio Frames, the Gemini Display Edition, and Project Aura. The Gemini Audio Frames sit at the entry level: they’re designed to look like normal prescription eyewear and pack cameras, microphones, and an AI voice assistant for hands-free queries, voice commands, and audio navigation. The Gemini Display Edition steps up to a monocular microLED heads-up display for turn-by-turn directions, real-time notifications, and on-the-go AI responses, and is positioned for professional and productivity use. Project Aura is the developer-focused kit at the top, with full binocular displays for spatial app development and enterprise use cases.

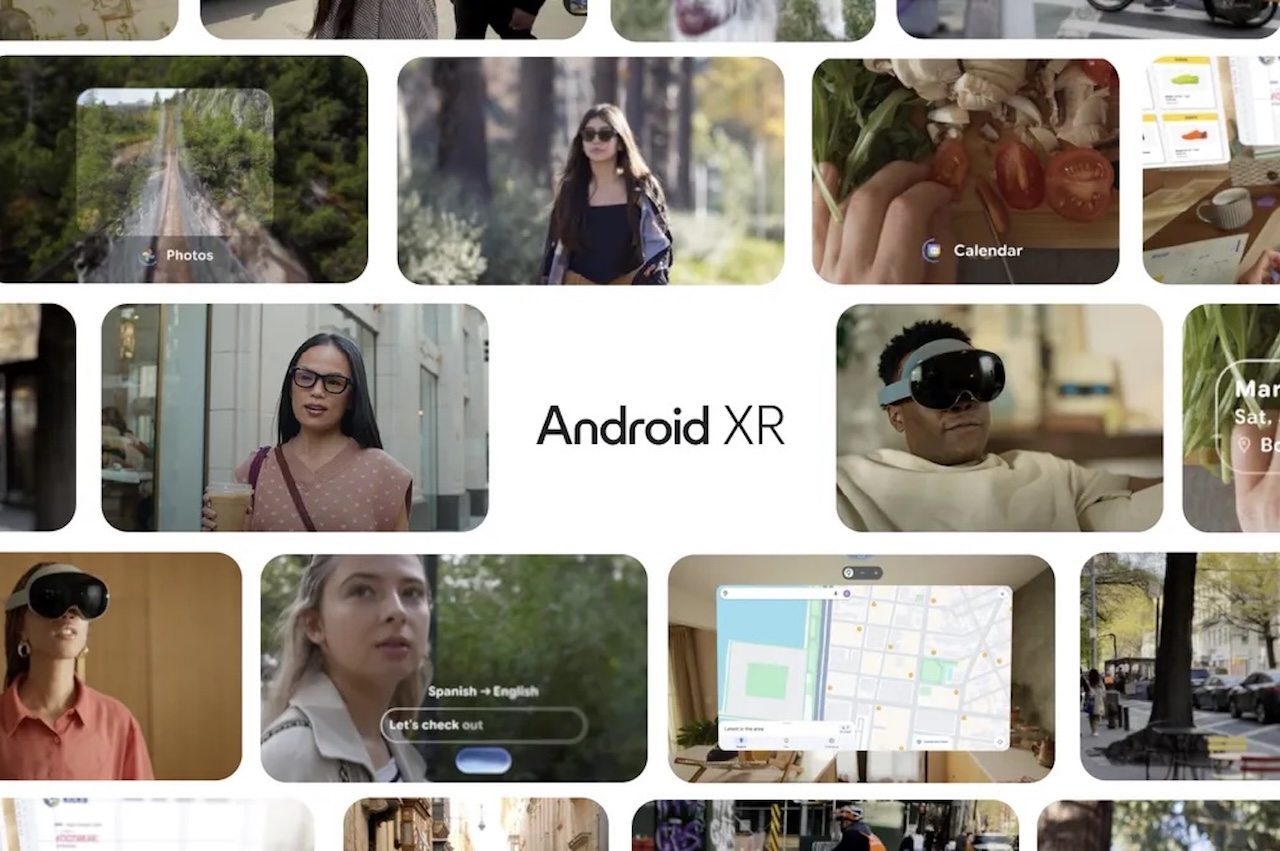

Important context on those names: Google’s December 2025 announcement only formally confirmed two consumer types, an audio-only model and a model with an in-lens display. The Gemini Audio Frames, Gemini Display Edition, and Project Aura tier names come from early industry briefings, not Google’s official communications. Project Aura also sits in a slightly different category from the other two. Google itself classifies it as wired XR glasses (with a tethered Xreal-built puck) rather than AI smart glasses, even though it shares the Android XR and Gemini stack.

How the AI Actually Works

Each model in the Google Gemini smart glasses lineup runs on the Gemini AI system paired with the Project Astra vision system. That combination unlocks real-time object recognition, contextual memory (so the glasses can remember where you left objects), and continuous interaction with whatever you’re looking at. Google’s December 2025 prototype demo showed the same kind of behavior in practice, with Gemini answering contextual questions like whether the produce in front of you is spicy, or whether you should bother reading the rest of a book series you’re scanning on a shelf.

Google is reportedly using a split compute architecture. Heavy AI tasks get offloaded to a paired smartphone or to cloud servers so the frames stay light enough to wear all day. The Nano Banana image editing tool, demoed alongside the December 2025 prototype, lets users edit photos in real time from a spoken command.
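To make the split compute idea above a bit more concrete, here’s a minimal Python sketch of how work could be routed between the frames, a paired phone, and the cloud. Google hasn’t published how its routing actually works, so everything here is an assumption for illustration: the `Task` and `Target` types, the `route` function, and the cost budgets are all hypothetical names and numbers.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Target(Enum):
    GLASSES = "glasses"
    PHONE = "phone"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Task:
    name: str
    compute_cost: int  # hypothetical, arbitrary units of work


# Hypothetical budgets: the most work each device handles comfortably.
GLASSES_BUDGET = 1
PHONE_BUDGET = 10


def route(task: Task, phone_connected: bool, cloud_reachable: bool) -> Optional[Target]:
    """Pick where a task runs, preferring the nearest device that can afford it."""
    # Tiny tasks stay on the frames so they work with no connection at all.
    if task.compute_cost <= GLASSES_BUDGET:
        return Target.GLASSES
    # Mid-sized tasks go to the paired phone when it is available.
    if phone_connected and task.compute_cost <= PHONE_BUDGET:
        return Target.PHONE
    # Anything heavier, or anything the phone can't take, goes to the cloud.
    if cloud_reachable:
        return Target.CLOUD
    # Nothing suitable is reachable, so the task can't run right now.
    return None


if __name__ == "__main__":
    for task in (Task("wake_word", 1), Task("translate_sign", 6), Task("scene_qa", 50)):
        print(task.name, "->", route(task, phone_connected=True, cloud_reachable=True))
```

The point of the design is that the frames only carry the lightest jobs, which is what would let them stay small and light while the phone or cloud does the heavy lifting.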

The Privacy Pitch This Time Around

Why did Google Glass fail? The original launched in 2013 and was pulled from consumer sale in 2015, with privacy backlash over its always-on camera a major reason. The new prototype tries to head that off with LED indicators that light up when the cameras or microphones are in use, similar to Meta’s Ray-Bans, plus speakers that reportedly minimize sound leakage so audio playback stays private to the wearer.

Google’s senior director of product management for XR, Juston Payne, has said that “glasses can fail based on a lack of social acceptance,” which is why privacy has to sit at the core of the design.

How Google Differentiates From Meta

Meta’s Ray-Bans lean social, with live streaming and content creation as headline use cases. Google’s pitch is the opposite, leaning into productivity through deep ties with Google Maps and the broader Android ecosystem. Google is positioning Gemini as more context-aware than Meta’s Llama-based AI for real-world tasks. Translation: Google’s going after professionals, enterprise users, and the everyday “I need help getting things done” crowd, not the social-first audience.

That positioning makes sense against the broader category data, where Meta already dominates. Meta and EssilorLuxottica sold over seven million AI glasses in 2025, more than triple the prior year, and Meta now holds roughly 82 percent of global smart glasses shipments. Demand for Meta’s newest Ray-Ban Display glasses was so strong in the US that Meta paused its planned early 2026 international expansion to the UK, France, Italy, and Canada, citing “unprecedented demand and limited inventory.” Apple is widely expected to enter the category but hasn’t confirmed a product.

The Eyewear Partners Lining Up

Google isn’t building the frames alone. Warby Parker and Gentle Monster are the consumer eyewear partners, with the brand collabs aimed at making the glasses look like normal eyewear instead of a tech helmet. Project Aura, the wired XR glasses Google built with Xreal, is set for 2026 availability and is a CES 2026 Innovation Awards honoree. The glasses are wired to an external puck that handles compute and battery duties, which is why Google classifies them as wired XR glasses rather than AI glasses.

The newest addition to Google’s eyewear lineup is Gucci, sitting at the luxury tier. Kering CEO Luca de Meo confirmed the Gucci and Google collaboration on April 16, 2026, with a 2027 launch window indicated. Combined, that gives Google three eyewear brands across price points and style identities, plus Xreal’s wired XR glasses, all running the same Android XR and Gemini stack.

Google AI Smart Glasses Release Date and Pricing

Google still hasn’t shared a hard launch date for the consumer Android XR glasses, though a 2026 launch is consistent with the company’s December 2025 announcement. Google’s Mobile World Congress demo in March 2026 reiterated that the launch date isn’t locked in yet but confirmed Warby Parker and Gentle Monster as the first eyewear brands to carry the AI-powered glasses. Pricing has not been officially announced for any tier.

The audio-only frames are the simplest of the lineup and the most likely candidate for an early consumer launch. Project Aura, as the developer kit, will likely show up first for enterprise and developer audiences. The Gucci pair is the furthest out, with a 2027 target.

Wrap-Up

The full picture isn’t here yet. Google still needs to confirm pricing, exact launch windows, and the final feature list for each tier of the lineup. What’s already clear, based on the available reporting, is that Google’s smart glasses strategy is broader than Meta’s, more productivity-focused, and aimed at making AI feel like a useful everyday tool rather than a social novelty. We’ll update this post as soon as Google, Xreal, Warby Parker, Gentle Monster, or Kering drops firm pricing and dates.